Transformer Architecture: Its Role in ChatGPT

Admin

We’ve all heard of ChatGPT by now. It’s an AI tool that can answer questions, have conversations, and even write stories. But have you ever wondered how it works? The secret behind ChatGPT is a powerful technology called Transformers.

These models have transformed how computers process and generate human language. From basic neural networks to the more advanced generative transformer-based architecture, we'll walk through the key concepts and innovations that made ChatGPT possible.

By the end of this article, you’ll have a solid understanding of the following:

- Feed-Forward Neural Networks: The foundation of many AI models.

- Recurrent Neural Networks (RNNs): How machines handle sequential data.

- Long Short-Term Memory (LSTM): A type of RNN that can remember longer sequences.

- Transformer Models: The generative architecture behind ChatGPT that revolutionized AI language models.

- Attention Mechanisms: The secret sauce of transformers that helps them understand context.

- The Power of Large Language Models (LLMs): How transformers scaled up AI language capabilities.

- ChatGPT and its Generative Pre-training: How ChatGPT was built and trained using transformers.

Let’s start with some basics.

Machine Learning: The Core Idea

At its heart, machine learning is about making predictions. Imagine you have a function F that takes some input X and outputs Y. Typically, we know the function and the input, and we calculate the output. For instance, if F(X) = 3X + 2, and X = 5, then Y equals 17. Easy, right?

But in machine learning, things flip. We’re given pairs of X and Y, but we don’t know the function. Our goal? Figure out the function that best fits the data. For instance, you might get pairs like (X = 0, Y = 2), (X = 1, Y = 5), and so on. With enough data, you’d guess that the function is something like F(X) = 3X + 2. But often, the relationship isn’t that straightforward.
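To make the idea concrete, here is a minimal sketch that recovers the function from example pairs using ordinary least squares. The first two pairs come from the text above; the other two are assumed to follow the same pattern, and the helper name `fit_line` is only illustrative.

```python
def fit_line(pairs):
    """Least-squares fit of y = a * x + b to a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    # Slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


# (0, 2) and (1, 5) are from the article; (2, 8) and (3, 11) are assumed.
pairs = [(0, 2), (1, 5), (2, 8), (3, 11)]
slope, intercept = fit_line(pairs)
print(slope, intercept)  # close to 3 and 2, i.e. F(X) = 3X + 2
```

A straight line is the simplest possible model. Neural networks apply the same idea (adjust parameters until predictions match the data) to relationships a line cannot capture.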

That’s why we need neural networks to help us out.

Neural Networks: How Machines “Learn”

Artificial Neural Networks (ANNs) are inspired by the neurons in our brains. These networks are made up of layers of artificial neurons, connected in a way that allows them to “learn” patterns from data. Each connection between neurons has a weight, which determines how much one neuron influences another. These weights are what the network adjusts during training. More neurons and connections usually mean the network can learn more complex things.

But let’s break it down.

In a feed-forward network, data moves straight through layers without looping back. The first layer receives input, and the final layer outputs the result. Each layer applies a set of weights to the data, transforming it. The problem? Feed-forward networks can’t handle sequences of data, like sentences. They treat each input separately, without remembering what came before.
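As a rough sketch of that forward pass, the code below pushes an input through two small layers. The weights are hand-picked for illustration (a real network learns them during training), and the ReLU activation between layers is an assumption, since the article does not name one.

```python
def dense(inputs, weights, biases):
    """One layer: each neuron computes a weighted sum of the inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def relu(values):
    """Simple activation function: negative values become zero."""
    return [max(0.0, v) for v in values]


def feed_forward(inputs, layers):
    """Pass data straight through the layers, never looping back."""
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:  # no activation after the output layer
            activations = relu(activations)
    return activations


# Hypothetical weights: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 2.0]], [0.1]),
]
output = feed_forward([2.0, 1.0], layers)
print(output)
```

Calling `feed_forward` twice with the same input always gives the same answer, no matter what was processed in between. That is exactly the "no memory" limitation described above.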

That’s where recurrent neural networks (RNNs) come in.

Recurrent Neural Networks (RNNs): Handling Sequences

RNNs were designed to process sequences. This makes them ideal for tasks like language translation, where the order of words matters. In an RNN, the output of one step feeds back into the network for the next step. Think of it like remembering each word in a sentence as you process the next one.

For example, imagine tracking how many times an API gets called per second. If you want to raise an alert when calls exceed 1,000 per second, you wouldn’t want to alert on every little spike. You’d calculate an average over time, checking whether that average stays high.

RNNs allow for this kind of repeated calculation over time. They pass along information from each step to the next. However, RNNs have a major flaw: they forget things quickly. If something important happens far back in a sequence, an RNN might not remember it by the end.
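The API example can be sketched as a single recurrent update, where the state from the previous step is mixed with the new input. This is a simplified stand-in, not a trained RNN: the mixing factor `keep` is a fixed, assumed value, whereas a real RNN learns its weights and usually applies a non-linearity such as tanh.

```python
def smoothed_rates(calls_per_second, keep=0.8):
    """Recurrent update: new_state = keep * old_state + (1 - keep) * input."""
    state = 0.0
    states = []
    for calls in calls_per_second:
        state = keep * state + (1 - keep) * calls  # state carries over to the next step
        states.append(state)
    return states


def should_alert(calls_per_second, threshold=1000, keep=0.8):
    """Alert only when the smoothed rate, not a single reading, exceeds the threshold."""
    return [s > threshold for s in smoothed_rates(calls_per_second, keep)]


spike = [200, 200, 3000, 200, 200]   # one brief spike
sustained = [1500] * 10              # load that stays high

print(should_alert(spike))
print(should_alert(sustained))
```

The brief spike never triggers an alert, while the sustained load does after a few steps. The same update also shows the forgetting problem: the influence of an old input shrinks by a factor of `keep` at every step, so after many steps it has almost vanished.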

This is why LSTM networks were developed.

Long Short-Term Memory (LSTM) Networks: Remembering More

LSTM networks solve the problem of short memory in RNNs. They’re a special type of RNN that can remember information for longer periods. They achieve this by using a memory cell that keeps track of information over time. LSTMs decide which information to keep and which to discard, based on gates that control the flow of data.
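Here is a minimal sketch of one LSTM step with a single memory cell, following the standard formulation with forget, input, and output gates. All parameter values are hand-picked assumptions chosen to make the behavior visible; a trained LSTM learns them from data and uses vectors rather than single numbers.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, p):
    """One LSTM step for a single scalar cell."""
    forget = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])   # how much old memory to keep
    write = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # how much new info to store
    read = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])     # how much memory to expose
    candidate = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])
    c = forget * c_prev + write * candidate   # updated memory cell
    h = read * math.tanh(c)                   # output for this step
    return h, c


# Hypothetical parameters: keep memory almost perfectly, write only when x is large.
params = {
    "wf": 0.0, "uf": 0.0, "bf": 10.0,
    "wi": 8.0, "ui": 0.0, "bi": -4.0,
    "wo": 0.0, "uo": 0.0, "bo": 0.0,
    "wg": 2.0, "ug": 0.0, "bg": 0.0,
}

h, c = 0.0, 0.0
h, c = lstm_step(1.0, h, c, params)   # something important happens
c_after_write = c
for _ in range(50):                   # then 50 uneventful steps
    h, c = lstm_step(0.0, h, c, params)
c_final = c
print(c_after_write, c_final)
```

With these settings the memory cell holds nearly the same value after 50 quiet steps, which is the behavior a plain recurrent update cannot easily provide.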

Let’s say you’re monitoring an API, but now you want to alert when there are zero calls for five consecutive seconds. An RNN might struggle to remember calls from five seconds ago, but an LSTM can handle it. It can keep track of a sliding window of the last five values and alert when the total is zero.
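For reference, the alerting rule itself can be written directly as a sliding window. This sketch is not an LSTM; it only spells out, in plain code, the behavior a trained LSTM would have to learn for this task. The function name is illustrative.

```python
from collections import deque


def zero_call_alerts(calls_per_second, window=5):
    """Alert when the last `window` readings are all zero."""
    recent = deque(maxlen=window)  # automatically drops the oldest value
    alerts = []
    for count in calls_per_second:
        recent.append(count)
        alerts.append(len(recent) == window and sum(recent) == 0)
    return alerts


readings = [3, 0, 0, 0, 0, 0, 0, 2]
print(zero_call_alerts(readings))
```

The alert fires only once five consecutive zero readings have been seen, and stops as soon as a call arrives.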

This ability to hold onto information for longer makes LSTMs great for things like language translation, where you need to understand how words relate to each other over long distances in a sentence.

But even LSTMs have limits. They still process data step by step, which can slow things down. That’s where transformers come into play.

Transformers: A Game-Changer in AI

In 2017, researchers at Google introduced the transformer model in a paper titled “Attention Is All You Need.” The transformer architecture has completely changed how we approach machine learning tasks, especially those related to language.

Here’s what makes transformers so special:

- They process data in parallel: Unlike RNNs, transformers don’t process sequences one step at a time. Instead, they look at the entire sequence all at once. This makes them much faster to train on modern hardware.

- They use self-attention: The transformer model uses a mechanism called self-attention, which lets every word weigh its relationship to every other word in the sentence at the same time. This is crucial for understanding context. For example, in the sentence, “The cat sat on the mat because it was raining,” self-attention helps the model connect “it” to “raining” (the weather) rather than to the cat or the mat.

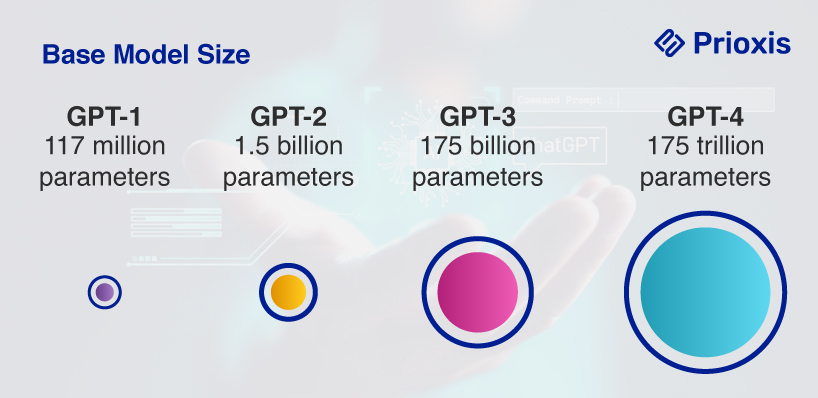

- They scale well: Transformers can be trained on huge datasets. That’s how models like GPT-3, which has 175 billion parameters, can perform impressive tasks.

Let’s dive deeper into the self-attention mechanism that powers transformers.

Self-Attention: The Key to Transformers

The self-attention mechanism allows transformers to understand relationships between different parts of a sentence. It does this by calculating three things for each word:

- A Query Vector: This represents the word we’re focusing on.

- A Key Vector: This is what each word offers for comparison, so the model can figure out how much attention that word deserves.

- A Value Vector: This holds the actual information the model passes along.

By comparing the query and key vectors, the model decides how much attention each word should get. For example, in the sentence, “The boy threw the ball,” the word “threw” is most related to “ball.” The attention mechanism helps the model figure this out.

The result? The transformer model doesn’t process each word individually. It understands how words relate to each other, allowing it to grasp the meaning of complex sentences. This attention mechanism is repeated across many layers, enabling transformers to understand language in a much more nuanced way.
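The comparison of queries and keys can be sketched as scaled dot-product attention, the formulation used in the original transformer paper. The two-dimensional vectors below are hand-made assumptions so that “threw” lines up with “ball”; in a real model, queries, keys, and values come from learned projections of word embeddings.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """For each query: score every key, normalize, then blend the values."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights


tokens = ["The", "boy", "threw", "the", "ball"]
# Hypothetical vectors, chosen only for illustration.
keys = [[0.1, 0.0], [1.0, 0.0], [0.0, 0.2], [0.1, 0.0], [0.0, 3.0]]
queries = [[0.1, 0.0], [1.0, 0.0], [0.5, 2.0], [0.1, 0.0], [0.0, 3.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [1.0, 0.0], [0.0, 2.0]]

outputs, weights = self_attention(queries, keys, values)
threw_weights = weights[tokens.index("threw")]
most_attended = tokens[threw_weights.index(max(threw_weights))]
print(most_attended)  # ball
```

Every query is scored against every key independently, which is why the whole sentence can be processed in parallel rather than word by word.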

How the Transformer Behind ChatGPT Is Built

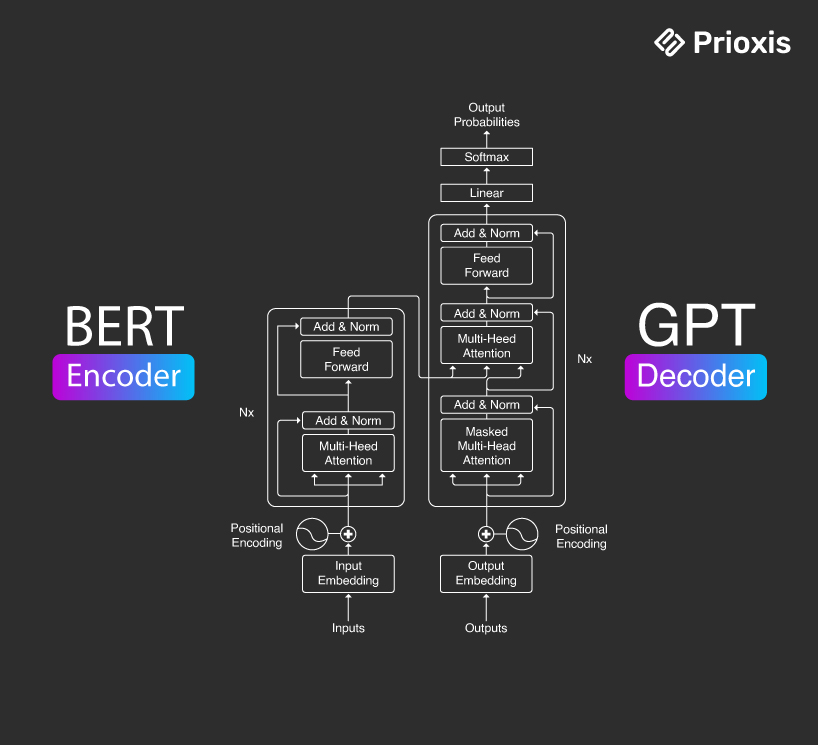

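The original transformer is made up of two main parts: the encoder and the decoder. GPT models, including the ones behind ChatGPT, keep only the decoder-style half, but it helps to understand both.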

Encoder

The encoder takes in the input sequence and creates a set of vectors that represent the input’s meaning. For example, if the input is a sentence, the encoder turns it into a series of vectors that represent the meaning of each word.

Decoder

The decoder takes these vectors and generates the output sequence, like translating the sentence into another language.

Both the encoder and decoder are made up of multiple layers, each with its own attention mechanism. This allows the transformer to understand complex relationships between words in both the input and output sequences.

Large Language Models (LLMs): Scaling Transformers

One of the biggest reasons transformers have become so powerful is their ability to scale. By training transformers on vast amounts of data, researchers have created Large Language Models (LLMs) like GPT-3 and the models behind ChatGPT.

LLMs can handle a wide range of language tasks, from answering questions to generating human-like text. These models are trained on billions of words, allowing them to understand the intricacies of language.

Let’s break down the acronym GPT:

- Generative: GPT models can generate new text based on what they’ve learned. That’s why ChatGPT can respond to questions, write stories, and even create code.

- Pre-trained: GPT models are trained on massive datasets before they’re fine-tuned for specific tasks. This pre-training helps the model understand language patterns across a wide range of topics.

- Transformer: At the heart of ChatGPT’s architecture is the transformer, which enables it to process language quickly and accurately.

ChatGPT: Putting Transformers to Work

ChatGPT is a great example of how transformers can be used in real-world applications. It launched on a model from the GPT-3.5 series, a descendant of GPT-3 that was trained on diverse text sources and then fine-tuned for conversation, to understand and generate responses to user inputs.

Here’s a quick breakdown of how ChatGPT’s architecture works:

- Input: You type a question or statement. For example, “What is the capital of France?”

- Processing: ChatGPT uses its knowledge of language to break down your question. It picks up on the key word, “France,” and understands that you’re asking for the capital.

- Response: Based on its training, ChatGPT knows that the capital of France is Paris, so it generates the answer.

The transformer’s ability to understand the relationships between words helps ChatGPT generate responses that feel natural and relevant.
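The input, processing, and response steps can be sketched as a loop that keeps picking the most likely next token until it reaches an end marker. This is a toy under loud assumptions: the lookup table `next_token_table` and its probabilities are invented for illustration, and it looks only at the last token, whereas a real GPT model scores the next token using attention over the entire conversation so far.

```python
def generate(prompt_tokens, next_token_table, max_new_tokens=10, end_token="<end>"):
    """Greedy generation: repeatedly append the most likely next token."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        candidates = next_token_table.get(tokens[-1])
        if not candidates:
            break  # the toy model has nothing to say after this token
        next_token = max(candidates, key=candidates.get)
        if next_token == end_token:
            break
        tokens.append(next_token)
    return tokens


# Hypothetical probabilities standing in for what a trained model would predict.
next_token_table = {
    "?": {"Paris": 0.9, "Lyon": 0.1},
    "Paris": {"<end>": 1.0},
}

prompt = ["What", "is", "the", "capital", "of", "France", "?"]
result = generate(prompt, next_token_table)
answer = result[len(prompt):]
print(answer)
```

Even in this toy form, the key point carries over: the answer is produced one token at a time, with each new token chosen based on what came before it.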

Why Transformers Are Better Than RNNs and LSTMs

So, why are generative transformer-based architectures so much better than RNNs and LSTMs for language tasks? Here are the key reasons:

- Parallel processing: Transformers don’t have to process sequences one step at a time. This means they can handle much longer sequences and do it much faster.

- Long-term memory: Transformers don’t have the same memory limitations as RNNs. Because they use self-attention, they can capture relationships between words even if they’re far apart in a sentence.

- Scalability: Generative transformer-based architectures are highly scalable, meaning they can handle huge datasets and complex tasks like language translation, text generation, and question-answering.

ChatGPT-4: How It’s Different

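ChatGPT-4 is widely reported to be bigger than its predecessors, with some estimates running into the trillions of parameters, although OpenAI has not published the exact figure. This extra size helps ChatGPT-4 pick up on much more detail and answer questions more accurately.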

But size isn’t the only reason ChatGPT-4 is special. It also uses smarter techniques to understand language better and respond faster.

Handling More Complex Conversations

One big improvement in ChatGPT-4 is how well it handles long conversations. In older versions, like GPT-3, the model sometimes “forgot” parts of the conversation if it went on too long. ChatGPT-4 can take in a much longer context window (the amount of text the model can see at once), so it can respond more intelligently even after many messages.

For example, if you’re chatting with ChatGPT-4 and reference something you said 10 questions ago, it will have a much better chance of understanding and responding correctly.

Faster and Smarter with Sparse Attention

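ChatGPT-4 is also thought to use something called sparse attention, although OpenAI has not confirmed the details of its architecture. Sparse attention means the model doesn’t need to compare every word with every other word. Instead, each word attends only to a selected subset, such as its nearby neighbors or a few important positions. This makes the model faster and helps it respond well without using too much computing power.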
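One simple form of sparse attention is a local window, where each position attends only to itself and a few positions before it. The sketch below compares how many word pairs are scored under full and windowed attention. The window pattern is an assumption chosen for illustration; the article does not say which sparsity pattern, if any, ChatGPT-4 uses.

```python
def local_attention_pattern(seq_len, window):
    """For each position, list the positions it may attend to:
    itself plus up to `window - 1` positions immediately before it."""
    return [
        list(range(max(0, i - window + 1), i + 1))
        for i in range(seq_len)
    ]


def count_pairs(pattern):
    """Number of (query, key) pairs that must be scored."""
    return sum(len(allowed) for allowed in pattern)


seq_len = 8
full = local_attention_pattern(seq_len, window=seq_len)  # window covers everything so far
sparse = local_attention_pattern(seq_len, window=3)

full_pairs = count_pairs(full)
sparse_pairs = count_pairs(sparse)
print(full_pairs, sparse_pairs)
```

With full attention the number of pairs grows roughly with the square of the sequence length, while with a fixed window it grows only linearly, which is where the savings in computing power come from.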

Multimodal Capabilities: Not Just Text

While earlier versions of ChatGPT only worked with text, ChatGPT-4 is capable of handling multiple types of information—like text, images, and even sounds. This means it’s not just limited to chatting. In the future, it could help analyze pictures or audio clips, making it useful for all kinds of tasks.

Imagine being able to ask ChatGPT-4 about a picture you upload, and it tells you exactly what’s happening in the image. That’s where this technology is heading.

Conclusion

In simpler terms, the transformer behind ChatGPT is a type of AI architecture introduced by Google researchers in 2017. It changed the way computers understand and process information, like language.

Transformers are faster and better than older models because they can look at all the words or data at once, instead of one at a time. This makes them powerful enough to run ChatGPT, create images, and even help in scientific areas like biology.

ChatGPT offers several benefits, but the transformers behind it aren’t perfect. They use a lot of computing power and can struggle with very long pieces of information.

That’s why scientists are already working on newer models that might be even faster and smarter. While generative transformer-based architectures are the best right now, technology moves quickly, and something new could take their place soon.