AI Solutions Architect: Role, Skills & How to Become One

Admin

Updated: 09 Apr, 2026

Anubhav Dwivedi

Anubhav is a Senior Content Writer with over 5 years of experience in creating impactful content strategies for B2B technology brands, specializing in SaaS, cloud computing, AI, and digital transformation.

"A blueprint is only as valuable as the builder who can bring it to life."

A model design is just a blueprint. On its own, it doesn’t do much. What makes the difference is the person who can take that design and build it into a system that actually works. That’s the role of an AI Solutions Architect. Without them, algorithms stay as nice ideas on paper, never quite surviving the mess of real-world systems.

Just as an urban planner designs a city to serve millions of people, an AI Solutions Architect considers not only what is possible but also what is sustainable during AI software development.

They anticipate traffic bottlenecks in data pipelines, design systems that can expand without collapsing, and ensure every part from model training to user interfaces works in harmony.

The rise of enterprise AI has made this role essential. At Prioxis, we help businesses turn AI ideas into working systems by connecting technical complexity with strategic goals. We make sure AI isn’t just deployed, but deployed with purpose and impact.

Every successful AI system starts with the right blueprint. Let Prioxis design yours.

What is an AI Solutions Architect?

An AI Solutions Architect is the person who connects business goals to workable AI systems. They decide how artificial intelligence will fit into a company’s existing technology, what it needs to deliver, and how it will be maintained.

The job is part strategist, part engineer. One day they might be mapping out an AI infrastructure for real-time fraud detection in a bank. The next, they could be defining an AI systems design that lets a retailer forecast demand and adjust supply chains automatically.

Unlike data scientists who focus on models, or software engineers who build features, the AI architect looks at the full picture: data pipelines, model deployment, integration of AI with cloud platforms, compliance with industry rules, and how all of it will scale when usage grows.

In short, they make sure AI solutions are not just accurate in the lab but reliable, secure, and cost-effective in the real world.

Responsibilities of an AI Architect

An AI Solutions Architect bridges the gap between business objectives and technical execution. Their role involves orchestrating complex AI systems so they are reliable, scalable, and aligned with enterprise goals. The responsibilities include:

- Defining AI Solution Architecture

Establish a complete blueprint that covers data pipelines, model selection, training environments, deployment workflows, and integration with existing systems.

- Selecting the Right AI Frameworks and Tools

Evaluate frameworks such as TensorFlow, PyTorch, or Hugging Face, and choose based on scalability, performance benchmarks, and compatibility with enterprise infrastructure.

- Designing Data Acquisition and Pre-processing Strategies

Define how raw data is collected, cleaned, normalized, and stored to ensure AI models are trained on accurate and high-quality datasets.

- Overseeing Model Lifecycle Management

Manage the entire lifecycle from experimentation to production, including continuous training, version control, and rollback mechanisms when performance degrades.

- Ensuring Scalability of AI Systems

Plan architectures that can handle increased workloads by using distributed training, parallel processing, and cloud-native deployment models.

- Embedding Security and Compliance Measures

Implement access controls, encryption, anonymization techniques, and compliance with regulations such as GDPR or HIPAA, depending on industry requirements.

- Collaborating with Multidisciplinary Teams

Work with data scientists, DevOps engineers, product managers, and domain experts to ensure the AI solution meets both technical and business needs.

- Monitoring Performance and Optimizing Models

Use monitoring tools and A/B testing to track model accuracy, latency, and resource usage, making iterative improvements to maintain relevance and reliability.

- Driving Innovation and Technology Adoption

Research emerging AI trends, evaluate their viability and impact on business transformation, and adopt technologies that enhance efficiency or unlock new growth opportunities.

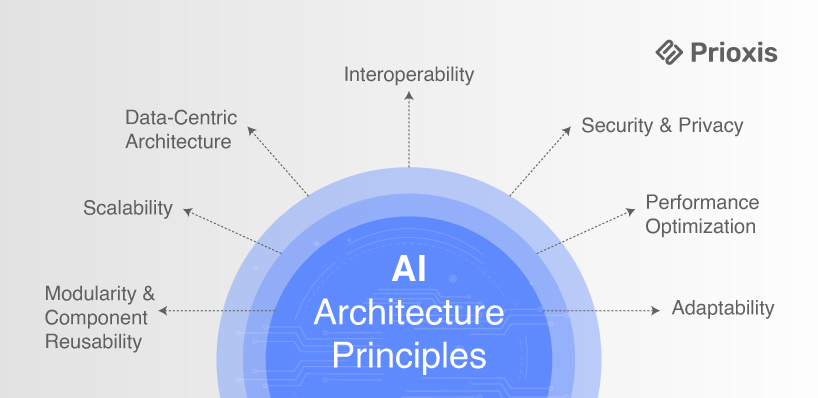

Principles Behind Artificial Intelligence Architecture

A robust AI architecture is more than just connecting algorithms to data. It is a structured ecosystem of components, processes, and governance measures that ensure the solution works reliably under real-world conditions. The following principles form the backbone of effective artificial intelligence systems design.

1. Modularity and Component Reusability

Breaking the AI solution into independent, replaceable components ensures faster updates, easier maintenance, and flexibility in scaling.

Best Practice

- Create separate modules for data ingestion, pre-processing, model training, inference, and monitoring

- Use standardized APIs between modules so each can be updated independently

Example

An AI system uses a modular setup where the ranking algorithm is isolated from the user interface and data ingestion layers. When a new ranking algorithm based on deep learning outperforms the old one, the team swaps only the ranking service without touching the frontend or retraining unrelated models. This avoids downtime and keeps deployment cycles short.
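As a rough illustration of the swap described above, here is a minimal sketch in which the ranking logic sits behind a small interface. The names `Ranker`, `PopularityRanker`, `BoostedRanker`, and `RankingService` are hypothetical and invented for this example; a production system would more likely swap a separately deployed service than an in-process object.

```python
from typing import Protocol


class Ranker(Protocol):
    """Contract every ranking module must satisfy (hypothetical interface)."""

    def rank(self, scores: dict[str, float]) -> list[str]: ...


class PopularityRanker:
    """Baseline: order items by raw score, highest first."""

    def rank(self, scores: dict[str, float]) -> list[str]:
        return sorted(scores, key=lambda item: scores[item], reverse=True)


class BoostedRanker:
    """Stand-in for a newer model: applies a per-item boost before sorting."""

    def __init__(self, boosts: dict[str, float]) -> None:
        self.boosts = boosts

    def rank(self, scores: dict[str, float]) -> list[str]:
        adjusted = {k: v * self.boosts.get(k, 1.0) for k, v in scores.items()}
        return sorted(adjusted, key=lambda item: adjusted[item], reverse=True)


class RankingService:
    """The only piece callers talk to; the ranker behind it is swappable."""

    def __init__(self, ranker: Ranker) -> None:
        self._ranker = ranker

    def swap(self, ranker: Ranker) -> None:
        self._ranker = ranker

    def top(self, scores: dict[str, float], n: int) -> list[str]:
        return self._ranker.rank(scores)[:n]


service = RankingService(PopularityRanker())
scores = {"a": 0.9, "b": 0.5, "c": 0.7}
before = service.top(scores, 2)          # uses the baseline ranker
service.swap(BoostedRanker({"b": 2.0}))  # callers of top() are unaffected
after = service.top(scores, 2)
```

Because callers depend only on `top()`, replacing the ranker does not require changes anywhere else, which is the property the example above relies on.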

2. Scalability by Design

AI systems must handle everything from low-traffic pilot runs to high-demand production workloads.

Best Practice

- Implement a microservices architecture with container orchestration tools like Kubernetes

- Design for horizontal scaling, so additional instances can be spun up automatically based on load

Example

An AI fraud detection platform for a global bank experiences sharp transaction spikes during holiday sales. The architecture automatically scales inference microservices during these peaks, while the data ingestion pipeline continues processing historical data at a steady pace. This prevents bottlenecks without overprovisioning resources.
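To make horizontal scaling concrete, the sketch below computes a replica count from observed load. It follows the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current replicas × current metric / target metric)); the function name, the default bounds, and the load figures are assumptions for illustration only.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_load: float,   # average load per replica, e.g. requests per second
    target_load: float,    # load per replica the service is tuned for
    min_replicas: int = 1,
    max_replicas: int = 20,
) -> int:
    """Proportional scaling rule, clamped to a configured range."""
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current_replicas * current_load / target_load)
    return max(min_replicas, min(max_replicas, raw))


# Holiday spike: each of 4 replicas sees 3x its target load.
peak = desired_replicas(4, current_load=900, target_load=300)

# Quiet period: load is half the target, so the service can shrink.
quiet = desired_replicas(4, current_load=150, target_load=300)
```

The upper bound is what keeps a traffic spike from turning into an unbounded bill, and the lower bound keeps a minimum capacity warm.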

3. Data-Centric Architecture

The reliability of an AI system depends on how data is collected, processed, stored, and governed.

Best Practice

- Maintain a unified data lake or warehouse with version control and lineage tracking

- Automate cleansing, anomaly detection, and labelling pipelines

Example

A medical diagnostics AI system ingests imaging data from multiple hospital branches. Before model training, all images are anonymized, standardized in format, and run through a quality scoring system that flags poor-quality scans. The result is a high-integrity dataset that complies with HIPAA while improving diagnostic accuracy.
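The pre-training checks in this example could be sketched roughly as follows. The field names, the scoring formula, and the 0.8 threshold are all hypothetical, and dropping a few fields is only a placeholder: real de-identification under HIPAA involves considerably more than this.

```python
from dataclasses import dataclass

# Hypothetical identifying fields; a real schema would differ.
IDENTIFYING_FIELDS = {"patient_name", "patient_id", "birth_date"}


@dataclass
class ScanCheck:
    record: dict
    score: float
    flagged: bool


def anonymize(record: dict) -> dict:
    """Return a copy of the record without the identifying fields."""
    return {k: v for k, v in record.items() if k not in IDENTIFYING_FIELDS}


def quality_score(record: dict, min_side: int = 512) -> float:
    """Toy score in [0, 1]: resolution adequacy times share of usable pixels."""
    side = min(record["width"], record["height"])
    resolution = min(1.0, side / min_side)
    completeness = 1.0 - record["missing_pixel_ratio"]
    return resolution * completeness


def check_scan(record: dict, threshold: float = 0.8) -> ScanCheck:
    """Anonymize first, then score, then flag anything below the threshold."""
    clean = anonymize(record)
    score = quality_score(clean)
    return ScanCheck(record=clean, score=score, flagged=score < threshold)


good = check_scan({"patient_name": "example", "width": 1024, "height": 1024,
                   "missing_pixel_ratio": 0.0})
poor = check_scan({"patient_id": "example", "width": 256, "height": 512,
                   "missing_pixel_ratio": 0.1})
```

Flagged scans would typically go to a review queue rather than being silently dropped, so that systematic problems at one site become visible.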

4. Interoperability Across Platforms

AI should integrate smoothly with enterprise systems, third-party APIs, and cloud environments.

Best Practice

- Use open model formats such as ONNX to ensure portability

- Build APIs (REST or GraphQL) for easy connection to web, mobile, and desktop applications

Example

A predictive maintenance AI for a manufacturing plant sends alerts to an SAP ERP dashboard, a Microsoft Teams channel for supervisors, and a mobile app used by on-site engineers. This is achieved without writing separate integrations for each platform, thanks to a unified API layer.
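One way to picture a unified API layer is a small hub that the model calls once, with each destination registered behind it. This is a sketch under assumptions: `AlertHub` and the channel names are invented here, and the channels simply append to lists where real adapters would call the ERP, chat, or push-notification APIs.

```python
from typing import Callable

Channel = Callable[[dict], None]


class AlertHub:
    """Single entry point the model calls; channels plug in behind it."""

    def __init__(self) -> None:
        self._channels: dict[str, Channel] = {}

    def register(self, name: str, channel: Channel) -> None:
        self._channels[name] = channel

    def publish(self, alert: dict) -> list[str]:
        """Send one alert to every registered channel; return the names reached."""
        delivered = []
        for name, channel in self._channels.items():
            channel(alert)
            delivered.append(name)
        return delivered


# Stand-in channels: each just records what it received.
erp_inbox, chat_inbox, mobile_inbox = [], [], []

hub = AlertHub()
hub.register("erp", erp_inbox.append)
hub.register("chat", chat_inbox.append)
hub.register("mobile", mobile_inbox.append)

reached = hub.publish({"machine": "press-7", "message": "bearing temperature high"})
```

Adding a fourth destination is then one more `register` call, with no change to the code that raises alerts.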

5. Security and Privacy by Default

Security should be designed into the system, not patched on at the end.

Best Practice

- Encrypt data both in transit and at rest

- Apply fine-grained, role-based access controls and maintain detailed audit logs

Example

An AI portfolio management system processes sensitive investment portfolios. All computation happens within secure enclaves, ensuring that neither developers nor cloud providers can see the raw client data. Access to model outputs is logged and reviewed weekly to detect any unauthorized queries.
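The access-control and audit-log part of this practice can be sketched in a few lines. The roles, permissions, and user names below are hypothetical, and the sketch does not attempt to model secure enclaves or encryption; it only shows that every access decision, allowed or denied, leaves a reviewable record.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "advisor": {"read_output"},
    "auditor": {"read_output", "read_audit_log"},
    "developer": set(),  # developers cannot see client-level outputs
}

audit_log: list[dict] = []


def authorize(user: str, role: str, action: str) -> bool:
    """Decide whether the action is allowed and record the decision either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


ok = authorize("dana", "advisor", "read_output")
denied = authorize("sam", "developer", "read_output")

# A periodic review might start from the denied attempts.
denied_attempts = [entry for entry in audit_log if not entry["allowed"]]
```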

6. Continuous Learning and Monitoring

AI systems should adapt to changing conditions without degrading performance.

Best Practice

- Deploy MLOps pipelines with automated retraining triggers

- Monitor for concept drift and track accuracy, latency, and resource usage over time

Example

A retail demand forecasting AI retrains itself every month using the latest sales, seasonal, and promotion data. If the monitoring system detects an unusual drop in accuracy, it alerts the operations team to investigate changes in customer behaviour, supply chain disruptions, or promotional anomalies.
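A monitoring check of this kind might look like the sketch below, which compares recent forecast error against a baseline. Mean absolute percentage error (MAPE) and the 50 percent tolerance are assumptions chosen for illustration; the source does not say which metric or threshold such a system would use.

```python
def mape(actual: list[float], forecast: list[float]) -> float:
    """Mean absolute percentage error; assumes no actual value is zero."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(abs(a - f) / abs(a) for a, f in zip(actual, forecast)) / len(actual)


def needs_investigation(
    baseline_error: float, recent_error: float, tolerance: float = 0.5
) -> bool:
    """True when recent error exceeds the baseline by more than the tolerance."""
    return recent_error > baseline_error * (1 + tolerance)


# Baseline measured at deployment time (hypothetical numbers).
baseline = mape([100, 200], [90, 220])

# Error on the latest period, after a change in customer behaviour.
recent = mape([100, 200], [70, 260])

alert = needs_investigation(baseline, recent)
```

A relative threshold like this tolerates normal month-to-month noise while still catching a sustained drop that warrants a human look.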

7. Performance Optimization and Cost Efficiency

AI must deliver results quickly while using resources efficiently.

Best Practice

- Apply hardware acceleration using GPUs or TPUs for model training

- Use techniques like quantization, pruning, and knowledge distillation for inference speedups

Example

A voice-enabled customer service assistant runs on low-power edge devices in retail kiosks. By quantizing the speech recognition model and pruning unused layers, the system achieves sub-200ms response times while reducing inference costs by 40 percent.
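To show what quantization does at its simplest, here is a sketch of symmetric 8-bit quantization on a plain list of weights. It is a teaching example only: real deployments rely on the quantization tooling of their ML framework, and this sketch makes no claim about the latency or cost figures quoted above.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four, at the price of a small, bounded rounding error; whether that error is acceptable has to be checked against the model's accuracy on real inputs.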

8. Adaptability for Future Innovations

The architecture should be ready for new algorithms, frameworks, and hardware advancements.

Best Practice

- Keep dependencies modular and avoid locking the system to one vendor

- Implement flexible pipelines that can accommodate new data types or model formats

Example

An intelligent document processing system originally built on TensorFlow can adopt a new transformer-based NLP model trained in PyTorch. Because the architecture uses ONNX as an intermediate format and containerized deployments, the team can swap the model in production without rewriting inference code or retraining unrelated systems.

Foundations of AI Systems Design

Strong AI systems are built the same way strong buildings are: on well-engineered foundations. This foundation is a mix of clear objectives, reliable data, the right architecture, and the ability to evolve as new challenges appear.

Think of the design process as a chain. Every link must hold because a weak one will bring down the whole system.

- Start With Purpose

An AI project that begins with a vague idea is already on shaky ground. The first step is defining the exact problem and what success looks like in measurable terms. Instead of saying “improve customer service,” set a target such as reducing average support ticket resolution time by 30 percent while keeping customer satisfaction above 90 percent.

- Use Clean, Relevant Data

Data is the raw material of artificial intelligence architecture. Quantity matters, but quality matters more. Data from multiple sources needs to be standardized, cleaned, and checked for bias before it can power any model. For example, a supply chain AI might need data from warehouse logs, GPS trackers, and vendor schedules, all formatted in a way the model can process.

- Pick the Right Approach

Not every challenge needs a deep neural network. Sometimes a well-tuned regression model delivers better results with less complexity. The architecture depends on the task, the computing environment, and the volume of data. A machine learning architect will balance performance, scalability, and maintainability at this stage.

- Test, Train, and Iterate

Training is not a one-time step. It is a cycle of testing, evaluation, and refinement until the model performs consistently across different scenarios. The goal is to ensure it works just as well on unseen data as it does in development.

- Deploy into the Real World

An AI model in a lab is one thing. An AI system running inside the unpredictable environment of an enterprise is another. Good AI systems design ensures the model integrates with existing workflows and technology stacks, whether that involves a cloud AI architect setting up serverless endpoints or a retail platform embedding predictions into its POS software.

- Monitor and Maintain

Once deployed, AI systems need ongoing attention. Models drift, data changes, and business priorities shift. Continuous monitoring, retraining, and optimization keep the system relevant and effective over time.
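The "Test, Train, and Iterate" step above hinges on checking performance on data the model has not seen. A minimal sketch of that check follows, using a toy rule in place of a trained model; the 20 percent holdout and the 0.05 allowed gap are assumptions, not recommendations.

```python
import random


def train_holdout_split(rows: list, holdout_fraction: float = 0.2, seed: int = 0):
    """Shuffle deterministically, then set aside a fraction the model never trains on."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    holdout_size = round(len(shuffled) * holdout_fraction)
    cut = len(shuffled) - holdout_size
    return shuffled[:cut], shuffled[cut:]


def accuracy(model, rows: list) -> float:
    """Share of (input, label) pairs the model predicts correctly."""
    return sum(model(x) == y for x, y in rows) / len(rows)


def generalizes(train_acc: float, holdout_acc: float, max_gap: float = 0.05) -> bool:
    """A large drop from training to holdout accuracy suggests overfitting."""
    return (train_acc - holdout_acc) <= max_gap


rows = [(i, i % 2) for i in range(100)]   # toy dataset: label is the parity of i
train, holdout = train_holdout_split(rows)

toy_model = lambda x: x % 2               # stand-in for a trained model
train_acc = accuracy(toy_model, train)
holdout_acc = accuracy(toy_model, holdout)
ready = generalizes(train_acc, holdout_acc)
```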

How to Execute a Scalable AI Implementation

Putting AI into a business is one thing. Making sure it still works when more people, more data, and new needs come in is another. To get there, you have to plan for scale from the start. Here’s what that looks like in practice.

- Start With Clear Goals

Be specific about what you want AI to achieve. Whether it is reducing customer wait times, improving sales forecasting, or automating reports, having a clear target helps you measure success.

- Get Your Data Ready

AI works best when it has good data to learn from. Gather the information you already have, fill in gaps, and make sure it is accurate and up to date.

- Plan for Scalability

Think about how your AI will handle more users, more data, or new features in the future. A good setup now saves time and money later.

- Pick the Right Tools

Choose the software and platforms that match your goals and budget. The right tools make your AI faster to build and easier to maintain.

- Start Small

Start with a small version of your AI that solves one part of the problem. This lets you see how it works and make changes before going bigger.

- Automate Data Flow

Set up a process where new data comes in and is ready for your AI to use without manual work. This keeps your AI fresh and accurate.

- Train and Test the Model

Teach your AI with the data you have, then test it to see how well it works. Make changes until it performs reliably.

- Make Sure It Is Fair and Accurate

Check for mistakes or bias that could cause problems. Test it in different situations to see how it performs in the real world.

- Launch in Stages

Roll out your AI to a small group of users first. If everything runs smoothly, expand it to more people.

- Watch and Improve

Analyze how your AI performs once it is live. Update it regularly so it stays accurate and useful.

- Plan for the Long Term

Put a process in place for updates, improvements, and new features. That way your AI stays valuable as your business grows.
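Launching in stages, as described in the list above, is often done by assigning each user to a stable bucket and widening the admitted range over time. The sketch below shows one common way to do that with a hash; the function name and user IDs are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, percent: int) -> bool:
    """Place each user in a stable bucket 0-99; admit buckets below `percent`."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


users = [f"user-{i}" for i in range(1000)]

# Stage 1: roughly a tenth of users. Stage 2: roughly half.
stage_one = [u for u in users if in_rollout(u, 10)]
stage_two = [u for u in users if in_rollout(u, 50)]
```

Because the bucket depends only on the user ID, anyone admitted at 10 percent stays admitted at 50 percent, so nobody flips back and forth between the old and new experience as the rollout widens.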

Efficient Enterprise AI, Built to Scale

Enterprise AI delivers value when it solves a high-impact problem and is designed to grow with the business. Start by targeting a use case with measurable results, such as predicting product demand or optimizing delivery schedules.

Build on modular, cloud-ready architecture so the system can handle more data, users, and features without a full rebuild. Ensure smooth integration with existing tools so teams can adopt it quickly.

Protect sensitive data from the start and meet all compliance requirements. Keep improving through regular monitoring, retraining, and feature updates so the AI remains accurate and relevant.

When planned this way, enterprise AI becomes a long-term asset that drives both efficiency and growth.

Design the Right AI Infrastructure

Before an AI model can work well, it needs the right setup behind it. The infrastructure decides how fast it runs, how much it can grow, and how reliable it will be.

- Start with the Model’s Demands

Your infrastructure should follow the AI workload, not the other way around. A computer vision model that processes 60 frames per second demands GPUs with low-latency memory access, while a fraud detection system with millions of small transactions may require CPU-heavy parallel processing and fast I/O rather than GPU acceleration.

- Weigh Cloud, Hybrid, and On-Premise by Risk and Scale

Cloud offers rapid scaling and access to specialized hardware like TPUs, but recurring costs can balloon for 24/7 inference workloads. Hybrid setups are effective when sensitive data must remain in a controlled environment while training happens in the cloud. On-premise can be more cost-effective for stable, predictable workloads but comes with higher upfront capital and slower scalability.

- Architect Data Flow for Speed and Integrity

Bottlenecks often occur before the model even runs. Streaming architectures with tools like Kafka or Kinesis can deliver data in real time, while batch pipelines suit periodic retraining. Implement data validation at the ingestion stage to prevent garbage inputs from degrading model accuracy.

- Build in Resilience from Day One

Distributed clusters with failover nodes, replicated storage, and automated load balancing prevent outages during peak demand. Without this, a single-point failure in a data centre or network can freeze inference across applications that depend on the AI.

A well-designed AI infrastructure is about matching resources precisely to workloads, anticipating scaling patterns, and protecting the system from data and operational risks.
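As one concrete piece of this, the ingestion-stage validation mentioned under data flow could be sketched as below. The required fields, the allowed currencies, and the rules themselves are hypothetical; a real pipeline would take them from its own schema.

```python
REQUIRED_FIELDS = ("transaction_id", "amount", "currency", "timestamp")
ALLOWED_CURRENCIES = ("USD", "EUR", "GBP")  # hypothetical whitelist


def validate_transaction(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record may proceed."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if f not in record]

    if "amount" in record:
        amount = record["amount"]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(amount, bool) or not isinstance(amount, (int, float)):
            problems.append("amount is not numeric")
        elif amount <= 0:
            problems.append("amount must be positive")

    if "currency" in record and record["currency"] not in ALLOWED_CURRENCIES:
        problems.append("unsupported currency")

    return problems


def split_batch(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Separate clean records from rejected ones so bad inputs never reach the model."""
    accepted, rejected = [], []
    for record in records:
        (rejected if validate_transaction(record) else accepted).append(record)
    return accepted, rejected


batch = [
    {"transaction_id": "t1", "amount": 25.0, "currency": "USD", "timestamp": "2026-01-01T10:00:00Z"},
    {"transaction_id": "t2", "amount": -5, "currency": "USD", "timestamp": "2026-01-01T10:01:00Z"},
    {"transaction_id": "t3", "amount": "12", "currency": "XYZ"},
]
accepted, rejected = split_batch(batch)
```

Keeping the rejected records, rather than discarding them, makes it possible to trace a quality problem back to the source system that produced it.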

Role of a Cloud AI Architect in Modern Deployments

A successful AI system is rarely the product of algorithms alone. It depends on the environment that supports it. Just as a city needs roads, utilities, and zoning before buildings can thrive, AI needs the right cloud infrastructure before it can deliver value. The cloud AI architect is the one who designs and maintains that environment.

In practice, this role means:

- Selecting cloud service models and compute resources that align with the model’s training and inference needs while avoiding waste.

- Designing secure, reliable data pipelines so information flows seamlessly from source to model to application.

- Ensuring the system meets compliance standards for data privacy and industry regulations.

- Planning for scalability so the solution performs consistently as data volumes and user demand increase.

- Continuously optimizing configurations to balance cost, performance, and sustainability.

A strong cloud AI architect bridges two worlds: the technical precision of infrastructure engineering and the strategic vision needed to make AI reliable, scalable, and ready for real-world impact.

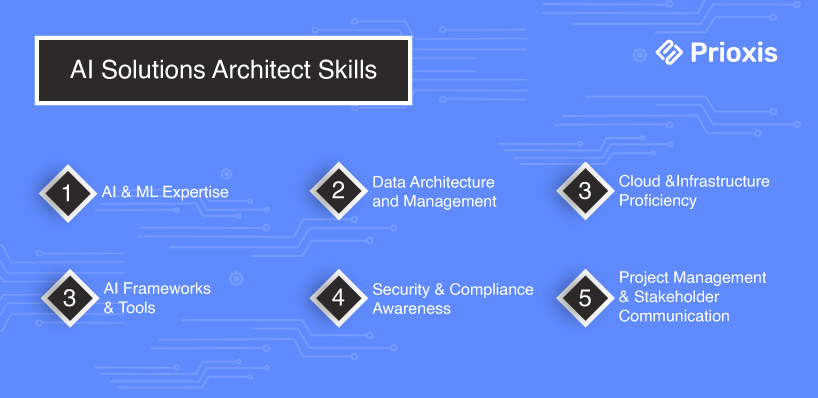

Skills Required to Become an AI Solutions Architect

An AI solutions architect is rarely just “building models.” They are designing ecosystems where data, infrastructure, and algorithms work together. The skill set is broad, spanning deep technical expertise and the ability to align AI capabilities with strategic business goals.

- AI and Machine Learning Expertise

A deep understanding of algorithms, model training techniques, and evaluation methods. This includes knowing when to use supervised learning, reinforcement learning, or other approaches based on the problem at hand.

- Data Architecture and Management

The ability to design systems that collect, clean, store, and serve high-quality data. Strong skills in database technologies, data lakes, and pipelines are essential for ensuring AI models have reliable input.

- Cloud and Infrastructure Proficiency

Familiarity with leading cloud platforms such as AWS, Azure, or Google Cloud, including their AI and analytics services. This covers containerization, orchestration, and resource scaling to support large-scale AI workloads.

- AI Frameworks and Tools

Hands-on experience with frameworks like TensorFlow, PyTorch, or Scikit-learn, as well as tools for experiment tracking, model deployment, and performance monitoring.

- System Integration Skills

The capability to embed AI models into existing enterprise systems without disrupting critical workflows. This often involves working with APIs, microservices, and message queues.

- Security and Compliance Awareness

Understanding how to secure data in transit and at rest, manage access control, and comply with industry-specific regulations such as GDPR, HIPAA, or PCI DSS.

- Project Management and Stakeholder Communication

Coordinating multi-disciplinary teams, aligning timelines with business priorities, and translating technical requirements into clear language for decision-makers.

- Problem-Solving Mindset

The capacity to think creatively under constraints, anticipate challenges, and adapt solutions when real-world variables shift.

A well-rounded AI solutions architect is as comfortable discussing neural network architectures with data scientists as they are mapping out an implementation roadmap with business leaders. This blend of depth and breadth is what helps them to design AI systems that not only work, but work for the long term.

Certifications That Help You Advance as an AI Architect

Experience may be your best teacher, but certifications are the formal recommendation letter it writes for you. In competitive enterprise AI, they show you can do more than talk about models over coffee: you can design, deploy, and manage them under real business pressure.

- AWS Certified Machine Learning – Specialty

Demonstrates your ability to design and deploy AI models on Amazon’s cloud, optimize cloud performance, and manage large-scale workloads. Essential for cloud AI architects working in AWS-heavy environments.

- Google Cloud Professional Machine Learning Engineer

Focuses on building, training, and deploying ML models on Google Cloud infrastructure, with a strong emphasis on scalability and security.

- Microsoft Certified: Azure AI Engineer Associate

Covers the integration of Azure AI services into enterprise solutions, including NLP, computer vision, and conversational AI.

- TensorFlow Developer Certificate

Highlights practical skills in building deep learning models using TensorFlow — a strong differentiator for architects who also prototype models.

- Certified Kubernetes Administrator (CKA)

While not AI-specific, it validates your ability to orchestrate scalable AI workloads in containerized environments, a critical skill for production-grade deployments.

- TOGAF® 9 Certification

Proves architectural thinking beyond AI, ensuring you can integrate AI systems into broader enterprise architectures without creating silos.

A strong certification portfolio doesn’t replace hands-on experience, but it does build credibility. For decision-makers, it is a tangible signal that you understand the technology stack and have the capability to lead AI initiatives successfully.

Conclusion

An AI Solutions Architect is the link between vision and execution. They design the data pipelines and automation solutions, choose the right frameworks, plan for scale, and make sure the system can survive the messy reality of enterprise environments. Without this role, AI often stays as a proof of concept that never reaches production.

For enterprises, the challenge is not just building models but building systems that deliver value over time. That is where Prioxis comes in. We help businesses design AI solutions that fit their goals, work with their data, and keep running as needs grow. If you are planning to take AI beyond the lab and into the core of your business, the first step is getting the architecture right.
